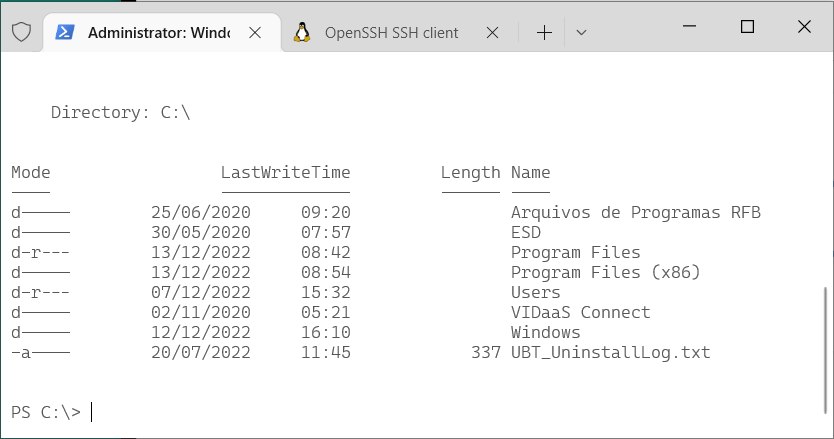

Nowadays, the command line on Windows (10 and later) doesn’t have to mean just PuTTY into a remote Linux machine. In fact, many Linux concepts have been incorporated into Windows.

Windows Subsystem for Linux

First, activate WSL. Since I prefer Fedora over Ubuntu, I followed this guide by Jonathan Bowman to set up WSL exactly as I like. The guide points to some old Fedora images, so follow its links carefully to find a newer one. It also explains how to initialize the Fedora image, set it as the default distribution, configure your user, etc.

Windows native SSH clients

Yes, Windows includes the OpenSSH tools, such as the plain ssh client, ssh-agent and others. No need for PuTTY.

This guide by Chris Hastie explains how to activate the SSH agent with your private key. I’m not sure it is complete: I haven’t yet tested whether it loads your key at session startup for a fully password-less experience. I’m still trying.

Basically, you need to enable the OpenSSH Authentication Agent (ssh-agent) Windows service and keep your private key in $HOME\.ssh\id_rsa, exactly as under Linux.

Windows Terminal

As we know, the old command prompt is very limited and obsolete. Luckily, Microsoft has released a new, much improved Terminal application that can be installed from the Microsoft Store. On Windows 11, the Terminal app is already there for you.

It lets you define sessions (profiles, in Terminal’s terms) that run custom commands such as wsl (to get into the Fedora WSL distribution installed above), cmd or ssh. I use tmux on every Linux computer I connect to, so my default access command is:

ssh -l USERNAME -A -t HOSTNAME "tmux new-session -s default -n default -P -A -D"
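In that command, -A forwards your local ssh-agent to the remote host, -t forces a terminal so tmux can start, and tmux’s -A attaches to the session named "default" if it already exists instead of creating a new one. A minimal sketch of how the command could be wired into a Terminal profile, assuming you edit settings.json by hand: the profile names, the placeholder GUIDs and the WSL distribution name "Fedora" are hypothetical, so replace them with your own (New-Guid in PowerShell generates a GUID, and wsl -l -v lists your distribution names). Windows Terminal accepts // comments in this file.

```json
"profiles": {
    "list": [
        {
            // Placeholder GUID (hypothetical): generate your own with New-Guid.
            "guid": "{6f1c2a3e-8d4b-4c7a-9e21-3b5d7f0a1c42}",
            "name": "HOSTNAME (tmux)",
            // The quotes around the tmux command must be escaped inside a JSON string.
            "commandline": "ssh -l USERNAME -A -t HOSTNAME \"tmux new-session -s default -n default -P -A -D\""
        },
        {
            // Second placeholder GUID (hypothetical): must differ from the one above.
            "guid": "{0d9e4b71-52a6-4f3c-8b17-c2e8a5f60d93}",
            "name": "Fedora (WSL)",
            // "Fedora" is an assumed distribution name; check yours with: wsl -l -v
            "commandline": "wsl.exe -d Fedora"
        }
    ]
}
```

Terminal usually generates a profile for each installed WSL distribution on its own, so the second entry may be redundant on your machine.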

The Windows Terminal app is highly customizable, including colors and icons, and this repo by Mark Badolato contains a great number of terminal color schemes. Pick a few from its windowsterminal folder and paste their JSON snippets into the "schemes" section of %LOCALAPPDATA%\Packages\Microsoft.WindowsTerminal_8wekyb3d8bbwe\LocalState\settings.json (that is, %USERPROFILE%\AppData\Local\...).
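A sketch of where a pasted scheme ends up and how a profile selects it by name. The scheme name "Example Scheme" and its color values are hypothetical placeholders, not one of the repo’s schemes; paste the real snippet in their place. Putting "colorScheme" under "defaults" applies it to every profile; you can instead set it on a single entry in "list".

```json
"schemes": [
    {
        // Hypothetical placeholder scheme: replace with a snippet from the windowsterminal folder.
        "name": "Example Scheme",
        "background": "#1E1E1E",
        "foreground": "#D4D4D4",
        "cursorColor": "#FFFFFF",
        "selectionBackground": "#264F78",
        "black": "#000000",
        "red": "#CD3131",
        "green": "#0DBC79",
        "yellow": "#E5E510",
        "blue": "#2472C8",
        "purple": "#BC3FBC",
        "cyan": "#11A8CD",
        "white": "#E5E5E5",
        "brightBlack": "#666666",
        "brightRed": "#F14C4C",
        "brightGreen": "#23D18B",
        "brightYellow": "#F5F543",
        "brightBlue": "#3B8EEA",
        "brightPurple": "#D670D6",
        "brightCyan": "#29B8DB",
        "brightWhite": "#E5E5E5"
    }
],
"profiles": {
    "defaults": {
        // Must match the "name" of a scheme defined in "schemes".
        "colorScheme": "Example Scheme"
    }
}
```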