In the neighborhood group called Cecilias e Buarques, someone asked for tips on things to do nearby other than eating, since they were on a diet. I sent the tips below.

Sala São Paulo has free and very inviting concerts every Sunday at 11 a.m. Arrive early to pick up a ticket; there is always room and it never fills up. Schedule here. There are some people living on the street in the surrounding area, which makes the region feel a bit sinister, but they bother no one and pose no danger at all.

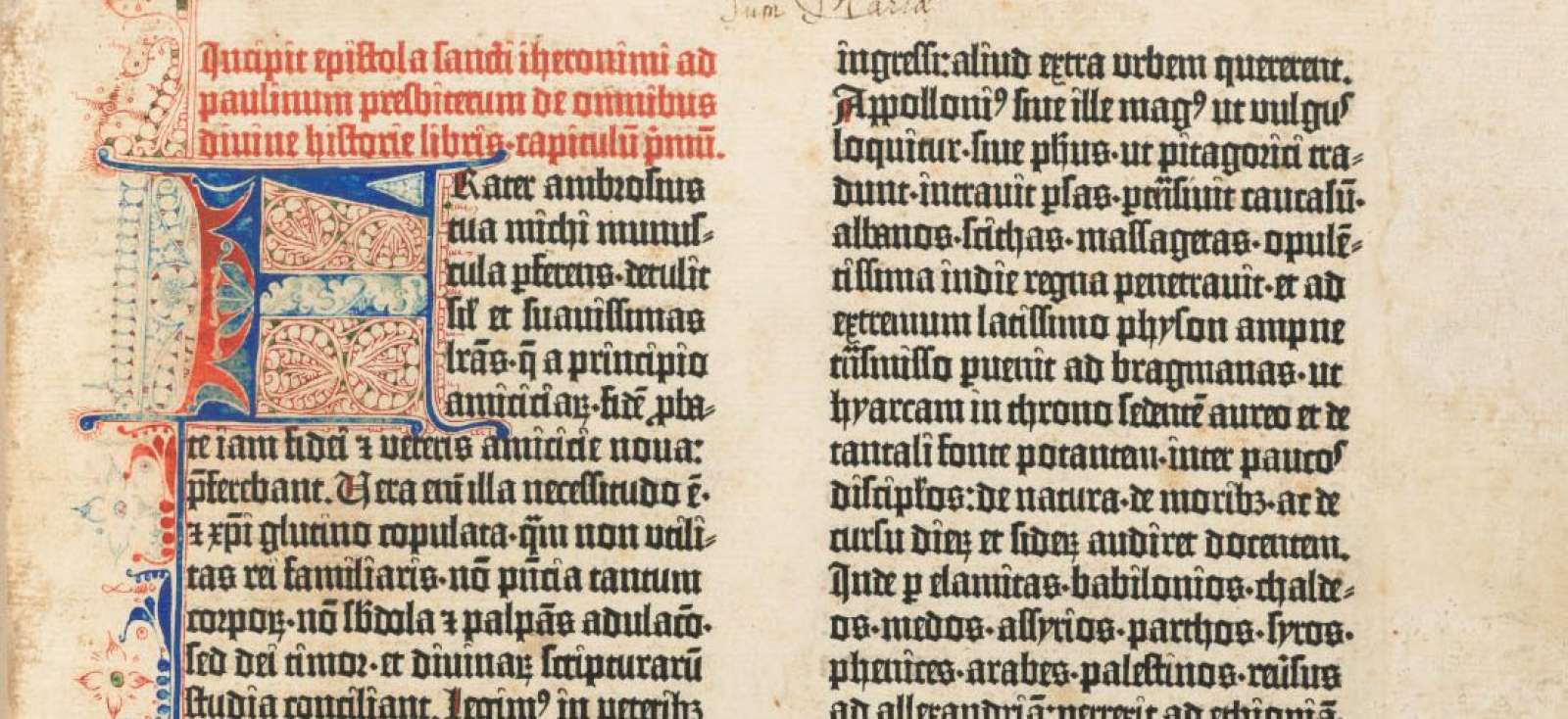

Next door is the incredible Museu da Língua Portuguesa.

A few blocks from there are the Pinacoteca and the Jardim da Luz. Both are unmissable, and both have a vocation for open-air sculpture.

All of these museums have free admission on Saturdays.

And since you will be close to Bom Retiro, its streets are great to visit, especially when the countless clothing stores are open. It is a historic neighborhood, important to the memory of immigration in Brazil. It also has many Korean and Jewish restaurants, cafés, and ice cream shops that are worth a visit.

When you go back to eating, go to Komah, a sophisticated but not too expensive Korean restaurant in Barra Funda. It is outstanding.

Walk to Largo do Arouche and Praça da República. At the latter, for years now, there has been a beautiful and very impressive capoeira circle on Sundays at 11 a.m. On Praça Dom José Gaspar there is a gallery that sometimes hosts, on all of its floors, a fair of artisans selling jewelry, marquetry, fashion, candles, and so on. Check the dates beforehand.

The whole República area was urbanized wonderfully, and you should take time there to contemplate the buildings and how they give vitality back to the city. It is a pity that the region is now going through a period of decline (which will end soon).

Still on Praça da República, the Ester Rooftop is a restaurant you should visit. Contemplate its Art Deco building. Contemplate the view from up high. On some days of the week there is a jazz trio playing inside. From Tuesday to Saturday there is live music covering a variety of styles.

Still in República, on the way to the Theatro Municipal (which you should also visit, and where you should see performances) but before you get there, is SESC 24 de Maio: beautiful, wonderful, I love it. If you are a member, there is an incredible rooftop pool to enjoy. On top of that, there is an abundant cultural program with theater, exhibitions, and interactive activities.

Another SESC is Consolação, near Mackenzie. There, every Tuesday at 7 p.m., and for many decades now, there are hugely important shows of Brazilian instrumental music, on a stage that makes room for artists with international standing who are nonetheless less well known than the Anitas and Pablos of the payola circuit. This project, Instrumental SESC, is free, and the shows are also streamed live on its YouTube channel, which is packed with historic recordings.

Make your way to the pedestrian streets of the Centro and visit the many exhibitions at Farol Santander (Edifício Altino Arantes, the Banespa building), including the Bar do Cofre in the basement. On Praça Antônio Prado there is more to see, such as the monument to Zumbi dos Palmares. On the same walk, contemplate the Art Deco and Art Nouveau architecture of these historic buildings. Also stop by the Centro Cultural Banco do Brasil, which is worth it for the building and for the traveling exhibitions. The Caixa Cultural is nearby too, also with exhibitions.

Around Praça Ramos de Azevedo you will find the Theatro Municipal, which offers very interesting guided tours during the day, in addition to its cultural program. There is also the gorgeous Edifício Matarazzo, which today houses the city hall and whose rooftop, I believe, is open to visitors. And there is Shopping Light, with guided tours and a charming bar on the rooftop.

The religious itinerary includes choirs and attractions at the Mosteiro de São Bento and the Catedral da Sé, usually on Sundays at Mass time. Check beforehand.

You can and should go to all of these places on foot, or take advantage of the free buses on Sundays.

Also on my Facebook.